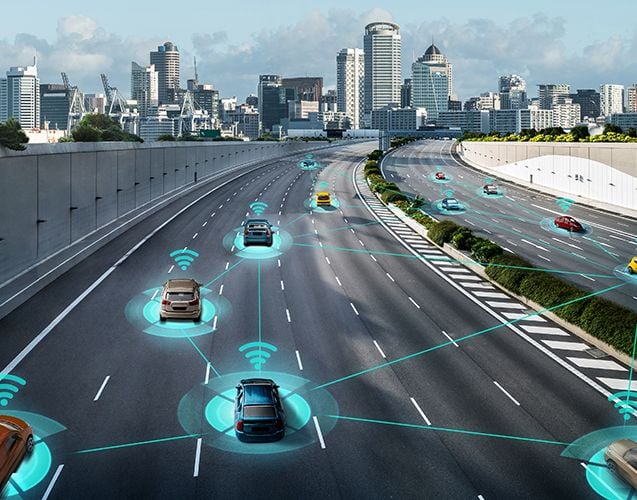

This paper investigates task management for cooperative mobile edge computing (MEC), in which a set of geographically distributed, heterogeneous edge nodes not only cooperate with remote cloud data centers but also help one another to jointly process tasks and support real-time IoT applications at the network edge. In particular, we address the challenge of optimizing the assignment of tasks to nodes in dynamic network environments where task arrivals, node computing capabilities, and network states are non-stationary and unknown a priori. We propose a novel stochastic framework that models the interactions among the involved entities, including edge-to-edge horizontal cooperation and edge-to-cloud vertical cooperation. We formulate the task assignment problem and develop an algorithm based on online reinforcement learning that optimizes task-processing performance while capturing the dynamics and heterogeneity of node computing capabilities and network conditions, without requiring prior knowledge of them. Further, by leveraging the structure of the underlying problem, we introduce a post-decision state and propose a function decomposition technique, both of which are incorporated into reinforcement learning to reduce the search space and computational complexity. Evaluation results demonstrate that the proposed online learning-based scheme outperforms state-of-the-art benchmark algorithms.
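The abstract describes the post-decision state and the function decomposition only at a high level. To illustrate how the two ideas can fit together, here is a minimal Python sketch built on assumptions of our own; it is not the paper's algorithm. It assumes a toy model with one task arriving per slot, no cloud offloading, per-node queues capped at a hypothetical `Q_MAX`, and a per-slot service probability (`service_probs`) that the learner never observes directly. The post-decision state is the queue vector right after the known effect of an assignment and before the unknown service outcome. The value function is assumed to decompose additively across nodes, so the learner keeps one small table per node instead of one table over all joint queue states. All names (`post_decision`, `node_cost`, `environment_step`, `DecomposedPDSLearner`, `simulate`) are hypothetical.

```python
import random

# Hypothetical toy parameters (assumptions, not taken from the paper).
Q_MAX = 10      # per-node queue cap; a task assigned to a full queue is dropped
GAMMA = 0.9     # discount factor
ALPHA = 0.1     # learning rate
EPSILON = 0.1   # exploration probability


def post_decision(queues, node):
    """Known, deterministic effect of assigning the arriving task to `node`."""
    pds = list(queues)
    pds[node] = min(pds[node] + 1, Q_MAX)
    return tuple(pds)


def node_cost(q):
    """Assumed per-node cost of a post-decision queue length (a delay proxy)."""
    return float(q)


def environment_step(pds, service_probs, rng):
    """Unknown stochastic part: node i finishes one task w.p. service_probs[i]."""
    return tuple(max(q - 1, 0) if rng.random() < p else q
                 for q, p in zip(pds, service_probs))


class DecomposedPDSLearner:
    def __init__(self, n_nodes, rng):
        # Assumed additive decomposition V(pds) ~= sum_i V_i(pds[i]):
        # n_nodes tables of size Q_MAX + 1 instead of (Q_MAX + 1) ** n_nodes entries.
        self.v = [[0.0] * (Q_MAX + 1) for _ in range(n_nodes)]
        self.rng = rng

    def score(self, queues, node):
        """Immediate cost plus discounted post-decision value of one assignment."""
        pds = post_decision(queues, node)
        return sum(node_cost(q) + GAMMA * self.v[i][q] for i, q in enumerate(pds))

    def greedy(self, queues):
        return min(range(len(queues)), key=lambda a: self.score(queues, a))

    def act(self, queues):
        # Epsilon-greedy exploration over the nodes.
        if self.rng.random() < EPSILON:
            return self.rng.randrange(len(queues))
        return self.greedy(queues)

    def update(self, prev_pds, next_queues):
        # Post-decision update: the target is the best (greedy) score at the newly
        # observed pre-decision state, split node by node under the decomposition.
        next_pds = post_decision(next_queues, self.greedy(next_queues))
        for i, (q_prev, q_next) in enumerate(zip(prev_pds, next_pds)):
            target = node_cost(q_next) + GAMMA * self.v[i][q_next]
            self.v[i][q_prev] += ALPHA * (target - self.v[i][q_prev])


def simulate(service_probs, steps=20000, seed=0):
    rng = random.Random(seed)
    learner = DecomposedPDSLearner(len(service_probs), rng)
    queues = (0,) * len(service_probs)
    for _ in range(steps):
        node = learner.act(queues)           # assign this slot's arriving task
        pds = post_decision(queues, node)    # known effect of the decision
        queues = environment_step(pds, service_probs, rng)  # unknown service outcome
        learner.update(pds, queues)
    return learner
```

For example, `simulate([0.2, 0.5, 0.9])` trains on three nodes whose service rates are hidden from the learner, after which `learner.greedy(queues)` gives the assignment the learned values favor for a given queue vector. With three nodes the decomposed learner stores 3 × 11 = 33 values rather than 11³ = 1331 joint entries, which illustrates the kind of search-space reduction the abstract mentions; the additive form is an approximation and may differ from the paper's actual decomposition.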

A Machine Learning-Based Task Assignment Framework for Cooperative Mobile Edge Computing
