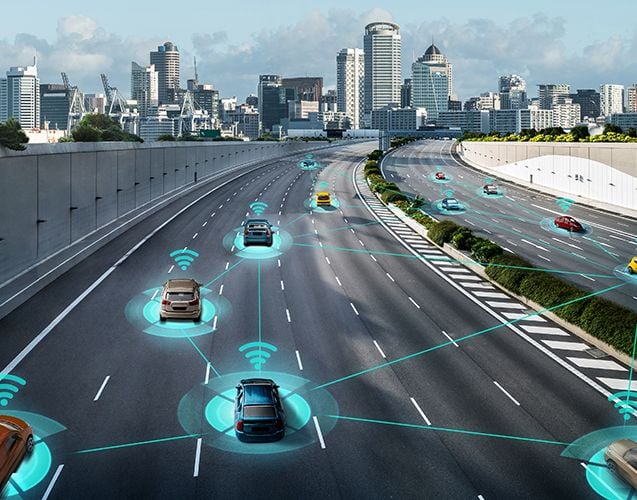

MEC and other edge computing initiatives address the need to place processing and storage where they are most appropriate, whether at a central location or at the network’s edge, depending on factors such as applications, traffic type, network conditions, subscriber profile, and operator preference. In this InterDigital-sponsored report, RCRWireless explores the evolution of the edge’s role in fixed and mobile networks and how it may affect network optimization, value-chain roles and relationships, business models, usage models and, ultimately, the subscriber experience.

Power at the edge: Processing and storage move from the central core to the network edge

May 2017

Learn more and download
